Over the past few years, being able to see how digital camera and VFX work lines up with live-action elements, sets and environments has become a critical aspect of many film and television workflows. Casey Schatz, Head of Virtual Production at visualization studio The Third Floor and a collaborator on projects like Avatar: The Way of Water, Game of Thrones and movies in the Marvel Cinematic Universe, has been a key innovator in helping line up these two worlds with film departments and on-set teams.

A new tool aiding this process is the Leica BLK360 for LiDAR scanning.

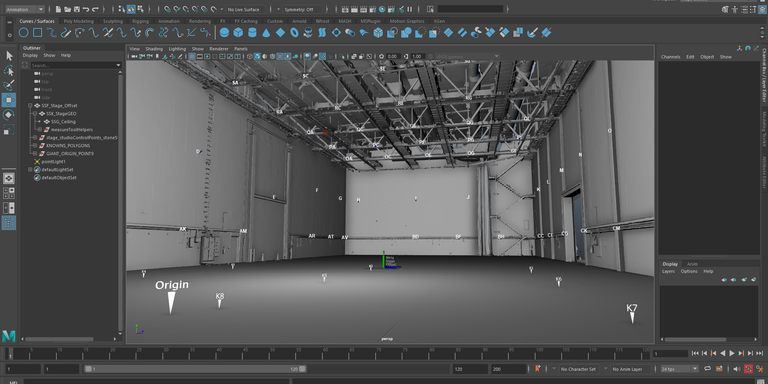

LiDAR scanning fits in with a range of pre-visualization, or “previs,” services, which can include a survey of a set or location to create a virtual environment and virtual cameras that respect the parameters of the actual place.

"Previs is about practicing the creative and technical aspects of the shoot before you set foot on stage,” Casey Schatz, Head of Virtual Production at The Third Floor, told Leica Geosystems. “When you have technically complex shoots and the worlds of construction, art department, and visual effects need to converge, it's best to rehearse digitally ahead of time.”

Schatz started using LiDAR years ago, renting total stations when the shoot required it, before buying a Leica BLK360 G1 when that compact scanner shipped in 2017. He has now continued the tradition with an all-new BLK360, capturing point clouds and turning them into meshes used for virtual production before, during, and after the shoot.

BLK360 on set

“Time is very precious on set,” he continued. “Laser scanning lets us align the digital and real much more efficiently.”

On set, the team would start by taking LiDAR scans of the live-action stage, followed by scans of the set and its relationship to various motion capture marks on the stage floor. This allows the alignment of the motion capture volume — where the camera and actor positions are being tracked — to the physical stage.
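As a rough illustration of that alignment step, the sketch below fits a rotation about the vertical axis and a translation that carry floor marks measured in motion capture coordinates onto the same marks picked out of a scan. It is a minimal sketch under stated assumptions, not The Third Floor's method: the function names are hypothetical, the marks are assumed to be matched pairs lying on a flat floor, and scale is assumed to agree between the two systems.

```python
import math

def fit_floor_alignment(mocap_marks, scan_marks):
    """Estimate the rotation (about the vertical axis) and translation that
    map mocap floor coordinates onto scanned stage coordinates.

    Both arguments are equal-length lists of (x, y) positions for the same
    physical marks, listed in the same order. (Hypothetical helper.)
    """
    n = len(mocap_marks)
    # Centroid of each set of marks.
    mcx = sum(p[0] for p in mocap_marks) / n
    mcy = sum(p[1] for p in mocap_marks) / n
    scx = sum(p[0] for p in scan_marks) / n
    scy = sum(p[1] for p in scan_marks) / n

    # Sum dot and cross products of the centered pairs; atan2 of the two
    # sums is the least-squares rotation angle in 2D.
    dot = 0.0
    cross = 0.0
    for (mx, my), (sx, sy) in zip(mocap_marks, scan_marks):
        ax, ay = mx - mcx, my - mcy
        bx, by = sx - scx, sy - scy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    angle = math.atan2(cross, dot)

    # Translation that carries the rotated mocap centroid onto the scan centroid.
    c, s = math.cos(angle), math.sin(angle)
    tx = scx - (c * mcx - s * mcy)
    ty = scy - (s * mcx + c * mcy)
    return angle, (tx, ty)

def apply_alignment(point, angle, translation):
    """Move one mocap floor position into scanned stage coordinates."""
    x, y = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y + translation[0], s * x + c * y + translation[1])
```

Once the fit is made from a handful of marks, `apply_alignment` can place any tracked position in the scanned stage's frame. A production pipeline would more likely solve the full 3D problem and report the residual error per mark.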

“In addition to the stage and the sets, we are also scanning props, the camera gear, lights — any piece of equipment I can get — so the fusion of real and digital is as authentic as possible,” Schatz said. “It makes the integration of the shoot all the more accurate and fast. The idea here is to fix it in pre-production. Let's solve as many of these problems as possible ahead of time.”

This approach has proved indispensable and cost-efficient, bringing together the many departments involved in the production, from construction to gaffers to grips, along with every department head who benefits from a shared shooting plan.

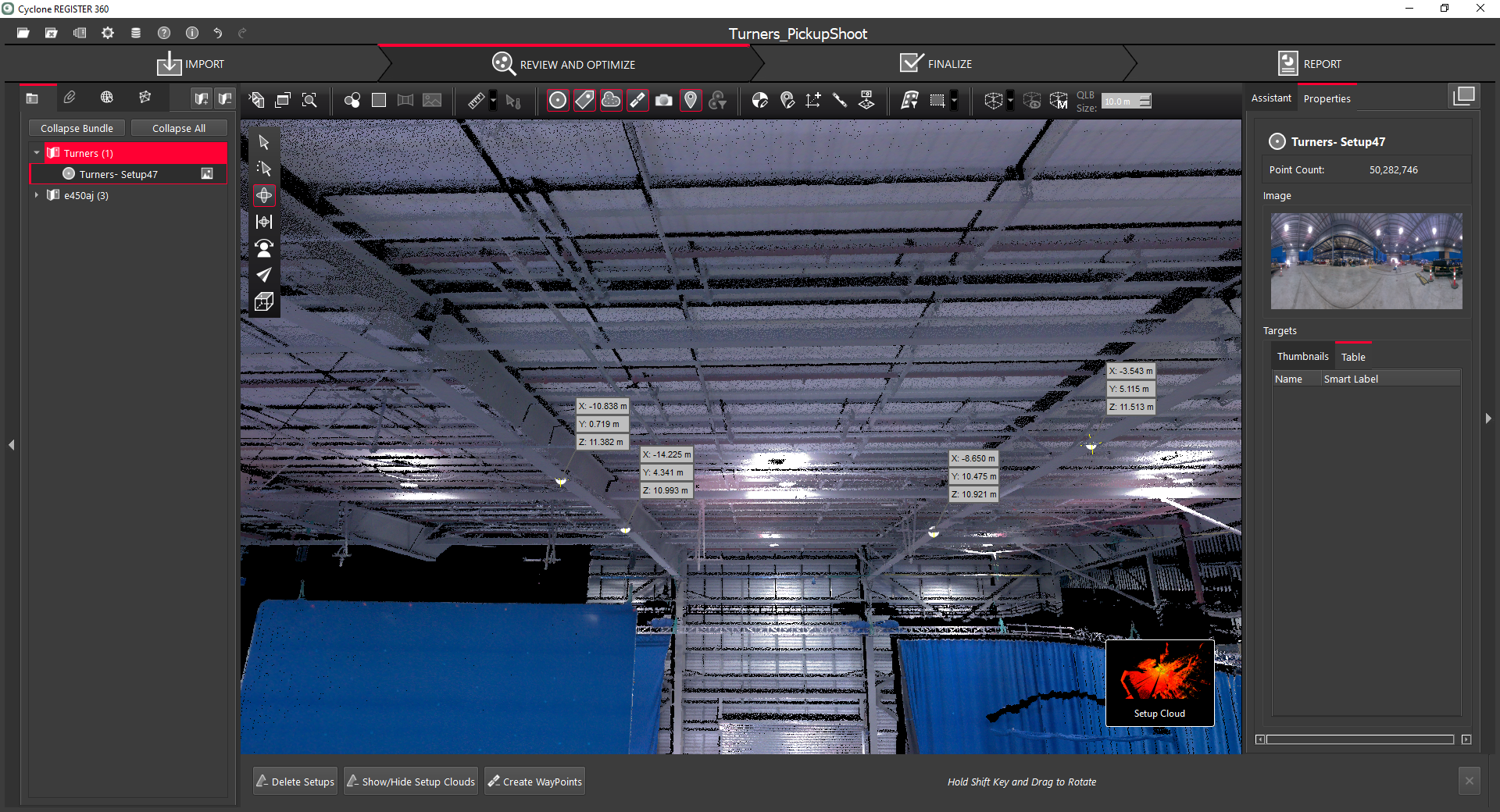

And while the visual effects industry primarily uses 3D meshes, not point clouds, Schatz benefitted from the immediate point cloud feedback available through Leica Cyclone FIELD 360.

“Being able to access the point cloud almost immediately led to workflows I didn't anticipate, like having members of the rigging department next to me and flying through the colored point cloud up to the ceiling and making critical decisions on the fly about pick points and safety concerns,” Schatz said.

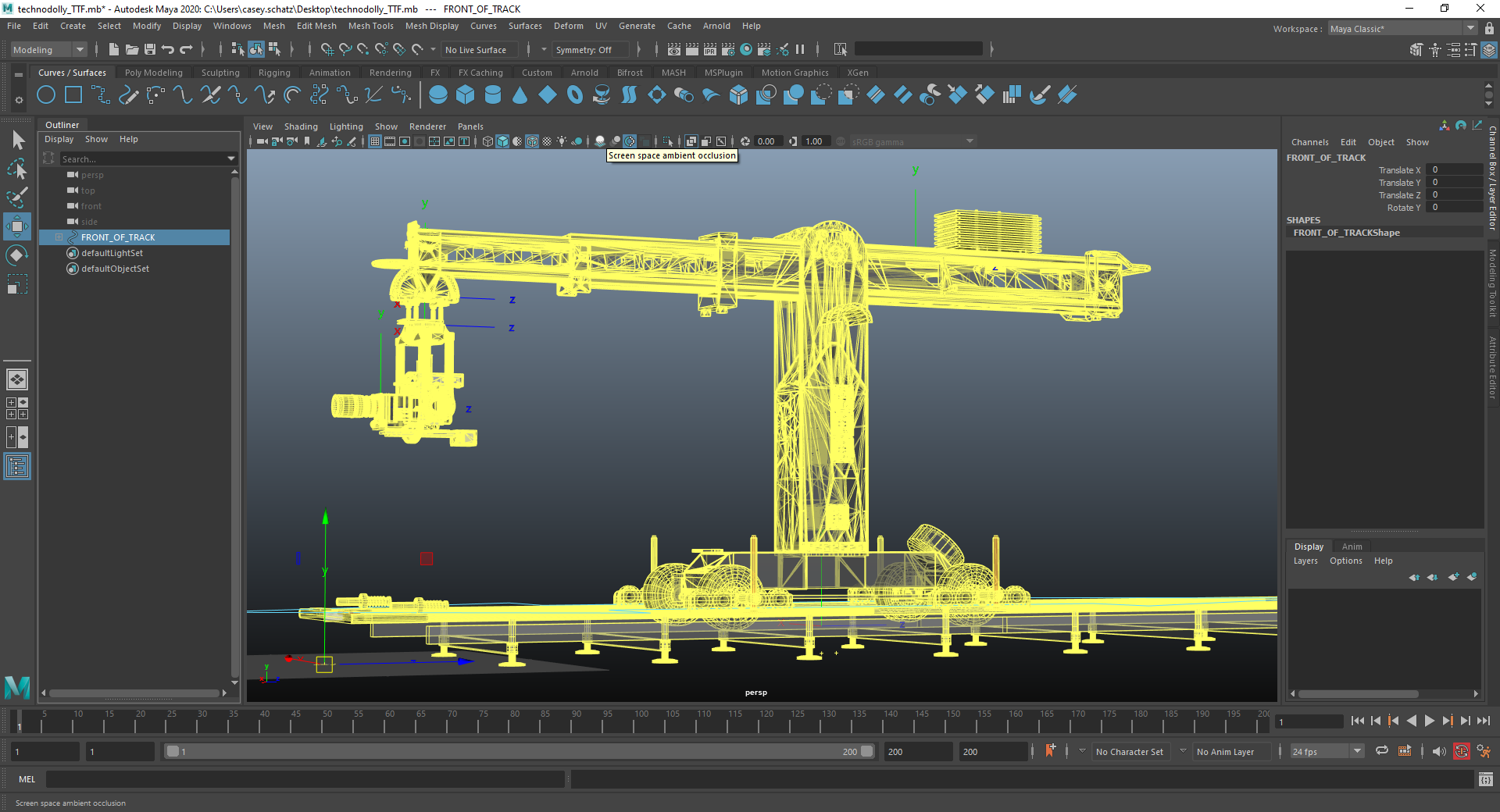

The speed of the laser scanning was particularly vital on Avatar: The Way of Water when employing the eyeline system: a monitor, suspended by four cables, that could move through space to stand in for a digital character.

“I needed to solve where the cable pick points would be and do so in a way that we can play through the whole scene and the cables don't intersect anything on set,” he continued. “As lights and truss and silks went up, I would scan almost hourly and run the simulation of the eyeline system to check that the cables and the monitor itself would not collide with anything. It's better to find these things out in CG (computer graphics) instead of reality.”
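The kind of check Schatz describes can be approximated with simple geometry. The following is a minimal sketch, not the simulation used on the production: it treats each cable as a straight segment from a pick point to the monitor, treats the latest scan as a list of 3D points, and flags any frame where a cable passes closer to a scanned point than a chosen clearance. The function names, the `clearance` parameter, and the straight-cable simplification are all assumptions made for illustration.

```python
import math

def point_to_segment_distance(p, a, b):
    """Shortest distance from point p to the segment a-b (all 3D tuples)."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    ab_len_sq = sum(c * c for c in ab)
    if ab_len_sq == 0.0:
        # Degenerate cable of zero length: fall back to point distance.
        return math.dist(p, a)
    # Project p onto the segment and clamp to its end points.
    t = sum(ap[i] * ab[i] for i in range(3)) / ab_len_sq
    t = max(0.0, min(1.0, t))
    closest = tuple(a[i] + t * ab[i] for i in range(3))
    return math.dist(p, closest)

def find_cable_conflicts(pick_points, monitor_path, scan_points, clearance):
    """Return (frame, cable_index, distance) for every cable that comes
    within `clearance` of any scanned point during the move.
    (Hypothetical helper; straight cables assumed.)"""
    conflicts = []
    for frame, monitor in enumerate(monitor_path):
        for cable_index, pick in enumerate(pick_points):
            nearest = min(
                point_to_segment_distance(p, pick, monitor) for p in scan_points
            )
            if nearest < clearance:
                conflicts.append((frame, cable_index, nearest))
    return conflicts
```

This brute-force loop compares every cable against every scanned point, which is fine for a sketch but slow on a full point cloud. A real tool would likely downsample the scan or use a spatial index, and would also test the monitor's own volume.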

From there, Schatz would process it all almost immediately to get the deliverables he needed.

“On average, I'd say once I sat down at my desk and plugged in the iPad, it was about five to seven minutes to have the point cloud in Leica Cyclone REGISTER 360, and about 20 minutes later to have a mesh inside of Maya,” Schatz said.

Virtual camera, real results

In CG, the camera is represented just by its nodal point and not the camera body and crane that support it in the real world. But by using the BLK360 to scan the actual camera crane in the environment, The Third Floor’s team was able to run the CG camera move, check it for collisions, and confirm that it stayed within the physical parameters of the crane.
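To make the "physical parameters" part concrete, here is a deliberately crude sketch that reduces a crane to a pivot point with a minimum and maximum arm reach and reports the frames of a camera move that fall outside that range. The function name and the pivot-plus-reach model are assumptions for illustration only; an actual crane has joint limits, a base footprint, and a camera head that this ignores.

```python
import math

def frames_out_of_reach(pivot, camera_path, min_reach, max_reach):
    """Return (frame, distance) for each camera position the simplified
    crane cannot reach. (Hypothetical helper; crane modeled as a pivot
    with a minimum and maximum arm reach.)"""
    unreachable = []
    for frame, camera_position in enumerate(camera_path):
        distance = math.dist(pivot, camera_position)
        if distance < min_reach or distance > max_reach:
            unreachable.append((frame, distance))
    return unreachable
```

Run against a scanned pivot position, a check like this would flag problem frames before the shoot day, which is the same "find it in CG first" idea applied to the camera rather than the cables.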

“There were a couple of times where I said to everybody a week ahead of time, ‘We might have to wild this wall [an industry term for making a set piece movable—Ed.]’ because it was apparent that the cameras were placed so close to existing structures. So the art department was able to say, ‘OK, we’d better be ready to move this on the day.’”

“We've induced this feedback loop where we can use the LiDAR measurements to say what the best position for the gear is; we scan it, and we then retrofit our CG equipment to obey reality. And the two things are in harmony,” he said.

Lights, camera — LiDAR

As for laser scanning on set, Schatz says the future is bright, especially given the speed and compactness of Leica Geosystems’ BLK devices.

“Part of my job is to be the ambassador between digital and real. The more I scan, the less potential for human error,” Schatz told Leica Geosystems.

“I had previously written off LiDAR as a tool not applicable to the quick pace of live-action shooting,” he said. “You could get incredible results, but the gear was big, expensive, and time-consuming. The BLK has disrupted this assumption, and I'll never be on set without it.”