Dinosaurs walking the earth. On-screen villains turning to dust before our eyes. Classic shots of the Empire State Building and the White House, and the VFX that destroys them on-screen. It’s the stuff of movie magic. Behind the scenes, it’s time-consuming and costly to create. That’s why the VFX industry is increasing its use of LiDAR, laser scanning, and reality capture technology to streamline production, create more realistic experiences, and save time and costs.

We caught up with Allan McKay, a VFX supervisor and FX technical director based in Los Angeles who has worked on films including Avengers: Endgame, Flight, and Star Trek: Into Darkness, as well as video games like Destiny, Call of Duty, and The Division. He has also worked closely with Oscar- and Emmy-nominated directors Robert Zemeckis and M. Night Shyamalan, spoken at conferences like SIGGRAPH and Autodesk University, and given talks at Industrial Light and Magic, Ubisoft, and Activision. McKay is a BLK360 customer, and we were curious to see how he uses the BLK360 at work.

How VFX Works in Media and Entertainment

VFX, along with media and entertainment in general, is an emerging field for LiDAR technology and laser scanning. This is because, before LiDAR data was available, VFX workflows often relied on guesswork. McKay said, “The typical process is to take what’s been filmed on set and create a seamless virtual recreation in 3D. The tricky part is that the team has to recreate the entire camera motion and the entire set to make sure everything seamlessly fits in it.”

For example, VFX that are added to camera shots after shooting must follow the exact camera movements throughout a shot so that everything lines up. Otherwise, the scene won’t look right. To address this issue, many VFX pros are forced to take lots of measurements. What often happens is that they have close guesses on how to recreate the motion yet are never assured of 100 percent accuracy. That’s where the BLK360 makes all the difference.

From location scouting to camera movement, the BLK360 offers precise LiDAR and visual data that helps VFX teams produce outstanding work. Before products like the BLK360, production teams without extensive budgets would need to hire survey firms to scan their locations and sets to create data sets that would be used as the foundation for VFX. This was expensive, required additional staff, took more time and left VFX teams relying on someone else’s data every time they wanted to use it.

BLK360 Simplifies and Speeds Up VFX Workflows

The BLK360 takes the guesswork out of VFX workflows and ensures accuracy and speed. McKay took us through an example of a typical VFX workflow using the BLK360:

“Let’s say you have to produce a dinosaur moving through a real-world environment. You have to align everything digitally to the real-world environment, including camera positions and movement,” he said.

Now you can simply survey the environment and use the data to accurately insert your VFX dinosaur, or whatever other asset you create. The BLK360 gives us access to reality in our computers. From there, it’s all about detailing it and using that data, but it’s a chance to capture the environment on that day, in that moment, and have it all there.

One of the most important features of using LiDAR scanners like the BLK360 is the ability not just to capture the dimensions of a set or a location, but to capture exact visual data as well, such as HDR imagery.

“Having all the information that you capture on the day of the shoot, even data from a moment of a shot, speeds up production. You not only capture measurements, but lighting and imagery as well—which helps you line up your VFX to the lighting conditions in the shot. As this tech becomes more accessible, it gives you accuracy and mobility at a good price point,” McKay said.

VFX pros like McKay no longer have to hire survey contractors to scan the space. The BLK360 is simple enough to use that they can do it themselves, and portable enough that they can take it anywhere, from filming on location to the studio. This creates stronger and faster workflows without relying on someone else’s data. “In feature films, this is a huge advantage, especially if the data is going to be reused for sequels, VR rides or other virtual experiences based on the film,” McKay said.

“One scan gives you data to speed up camera tracking and gives you something to build all of your virtual environment assets. Rather than guessing and recreating, you already have the virtual environment to accurately add all the extra pieces to a shot.”
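To make the camera-tracking point concrete, here is a minimal sketch of how surveyed 3D points can be used to check a camera guess. It is an illustration under stated assumptions, not McKay's workflow or any BLK360 software: the pinhole camera is simplified to rotate only about the vertical axis, and every name and number (`project_point`, `reprojection_error`, the scan points, the focal length) is hypothetical.

```python
import math


def project_point(point_world, cam_position, yaw_deg, focal_px, principal_point):
    """Project a world-space point (x, y, z) to pixel coordinates (u, v).

    Simplified pinhole model (an assumption for illustration): the camera
    rotates only about the vertical y axis and looks down its own +z axis.
    """
    # Move the point so the camera sits at the origin.
    dx = point_world[0] - cam_position[0]
    dy = point_world[1] - cam_position[1]
    dz = point_world[2] - cam_position[2]

    # Rotate from world axes into camera axes (inverse of the camera yaw).
    yaw = math.radians(yaw_deg)
    x_cam = math.cos(yaw) * dx - math.sin(yaw) * dz
    z_cam = math.sin(yaw) * dx + math.cos(yaw) * dz
    y_cam = dy

    if z_cam <= 0:
        raise ValueError("point is behind the camera")

    # Perspective divide; image v grows downward while world y grows upward.
    u = principal_point[0] + focal_px * x_cam / z_cam
    v = principal_point[1] - focal_px * y_cam / z_cam
    return (u, v)


def reprojection_error(points_world, tracked_pixels, cam_position, yaw_deg,
                       focal_px, principal_point):
    """Mean pixel distance between projected scan points and tracked features."""
    total = 0.0
    for point, pixel in zip(points_world, tracked_pixels):
        u, v = project_point(point, cam_position, yaw_deg, focal_px, principal_point)
        total += math.hypot(u - pixel[0], v - pixel[1])
    return total / len(points_world)


# Hypothetical values: three points picked from a scan, in meters.
scan_points = [(0.0, 1.0, 10.0), (2.0, 0.5, 12.0), (-3.0, 2.0, 15.0)]
FOCAL_PX = 1000.0
PRINCIPAL = (960.0, 540.0)

# Simulate where those points appear in the footage for the real camera pose.
true_position, true_yaw = (0.0, 1.5, 0.0), 0.0
tracked = [project_point(p, true_position, true_yaw, FOCAL_PX, PRINCIPAL)
           for p in scan_points]

# An eyeballed camera pose, slightly off in position and rotation.
guess_position, guess_yaw = (0.2, 1.5, 0.0), 1.0

print("error with eyeballed pose:",
      reprojection_error(scan_points, tracked, guess_position, guess_yaw,
                         FOCAL_PX, PRINCIPAL))
print("error with correct pose:",
      reprojection_error(scan_points, tracked, true_position, true_yaw,
                         FOCAL_PX, PRINCIPAL))
```

The idea is that measured 3D positions turn the camera solve into something you can check: a camera guess is good only if the scan points land on the same pixels where they appear in the footage. A production tracker would solve for full 3D rotation, lens distortion, and many more points.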

BLK360 Helps Create Reusable VFX Assets

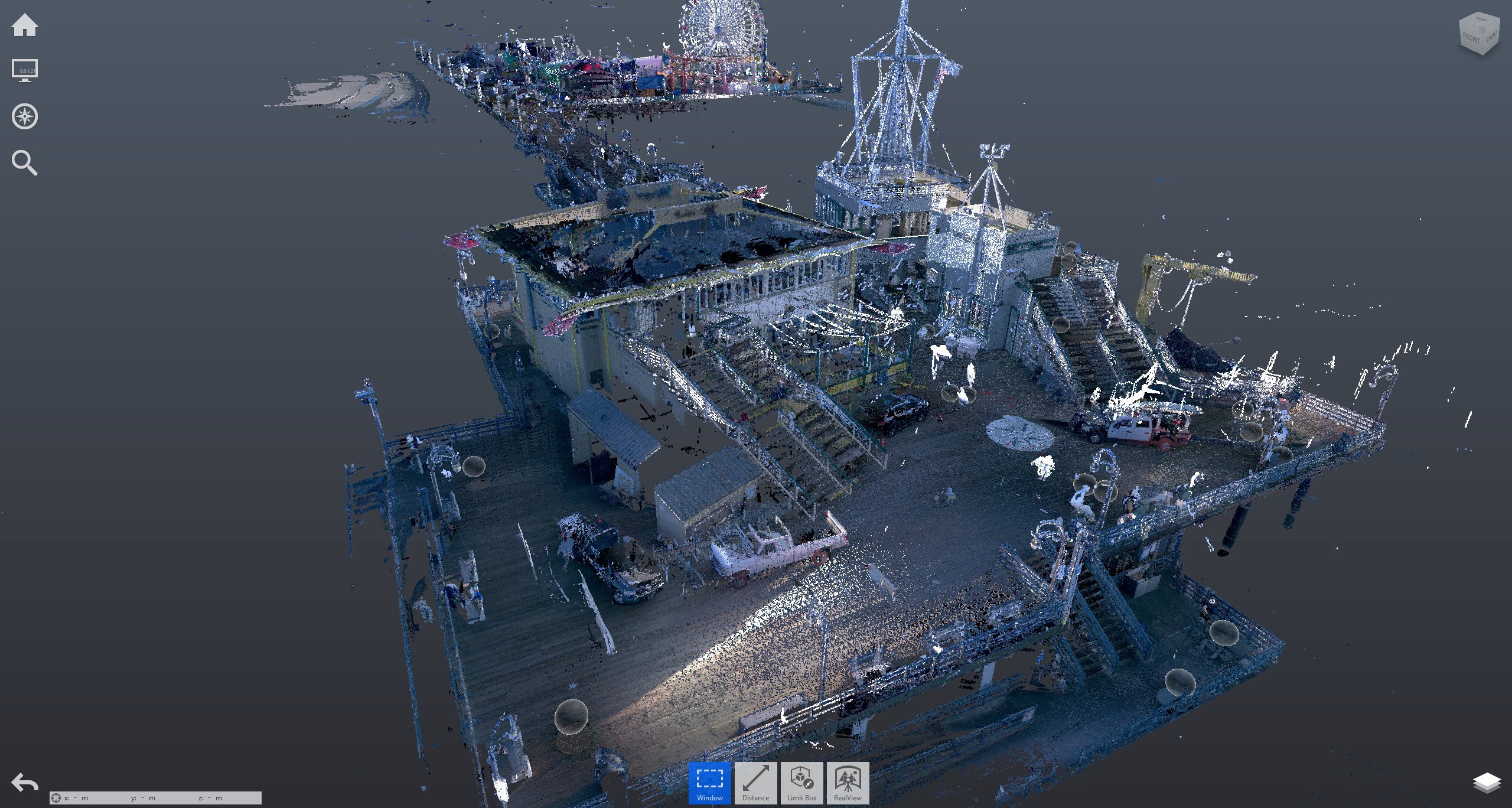

McKay gave us a sneak peek into how the BLK360 plays a role in an upcoming film that features a VFX shot of the Santa Monica pier in Los Angeles getting destroyed. Using the BLK360, McKay scanned the entire pier by himself in a single morning. Now he has an intricate reference of the location and can go back to his office to build it in VFX and ensure the pier breaks apart the way it would in the real world.

“If I eyeballed the spot or built the set using only photos, it would never be as accurate. Just by having captured that location, multiple studios now want to buy the 3D model of the pier. It’s an asset we can reuse in production because everyone is confident that the model is to scale and accurate,” said McKay. “You can see how others might use a similar approach with a LiDAR scan for frequently used locations and buildings such as the Empire State Building or the White House that can be used in multiple productions.”

BLK360 Allows a VFX Producer to Work Alone and Teach Others

McKay also shares his knowledge and experience with the community by uploading free classes and tutorials on VFX to help up-and-coming creatives learn more about creating great VFX. One learning module featured his work on Avengers: Endgame. He created a piece of the film in which the villain Thanos turns to ash and breaks apart, an effect known as “decimation.”

He showed how he documented the whole process by shooting an environment around Portland, Oregon. “I used Blackmagic’s Pocket Cinema 4K camera, shot High Dynamic Range Images with a film camera, and scanned with the BLK360. I did the entire shoot and production by myself and turned it around within 24 hours. Usually I would need a team and a lot more equipment to do that.”

This example showcases the process and helps bring awareness to LiDAR, photogrammetry, and HDRI for lighting, and to how they all work together for the VFX industry. “I’m blown away by how I can do all that myself with all the gear I need in one backpack,” McKay added.

The BLK360 is gaining traction in the media and entertainment industry. Francois Chardavoine of Industrial Light and Magic praised the device at HxGN Live and mentioned that it has become an essential part of his team’s toolkit. McKay’s work highlights how the BLK360 can be used by anyone in the media and entertainment industry, and how the data it provides helps professionals produce outstanding work with more speed and accuracy, at an accessible price. We’re truly excited to see the next level of VFX that pros like McKay can create using the BLK360.

If you are a VFX creator and would like to learn more from McKay, you can sign up for his free courses here.

Disclaimer: This article features the Leica BLK360 G1. Explore the expanded capabilities of the latest BLK360 model here.